Communication System

Information Theory and Error Control coding

Practice questions from Information Theory and Error Control coding.

Consider an additive white Gaussian noise (AWGN) channel with bandwidth $W$ and noise power spectral density $N_0/2$. Let $P_{av}$ denote the average transmit power constraint.

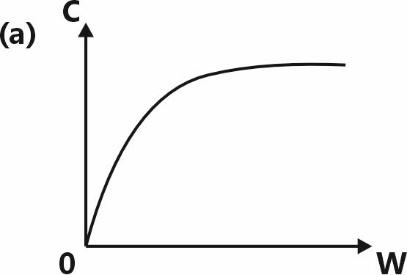

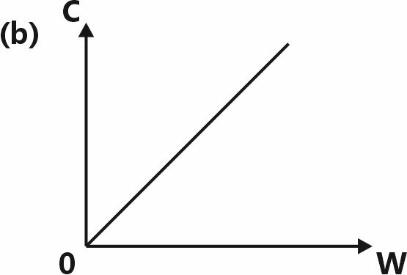

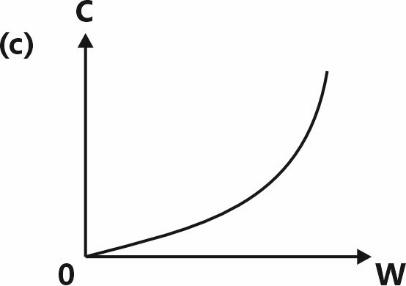

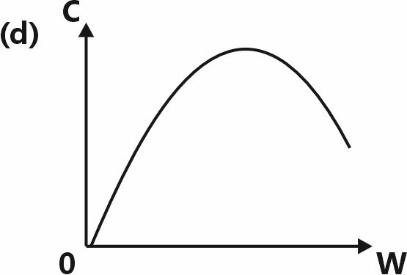

Which one of the following plots illustrates the dependence of the channel capacity $C$ on the bandwidth $W$ (keeping $P_{av}$ and $N_0$ fixed)?

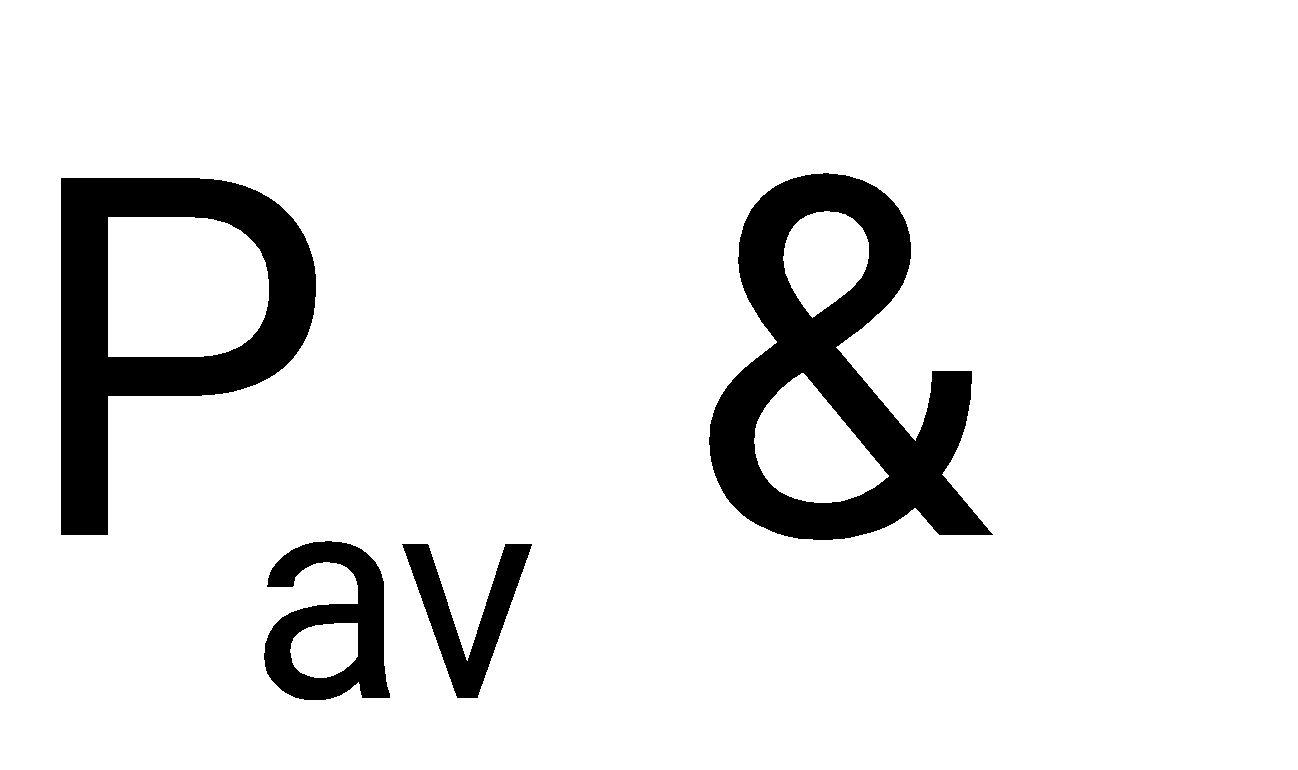

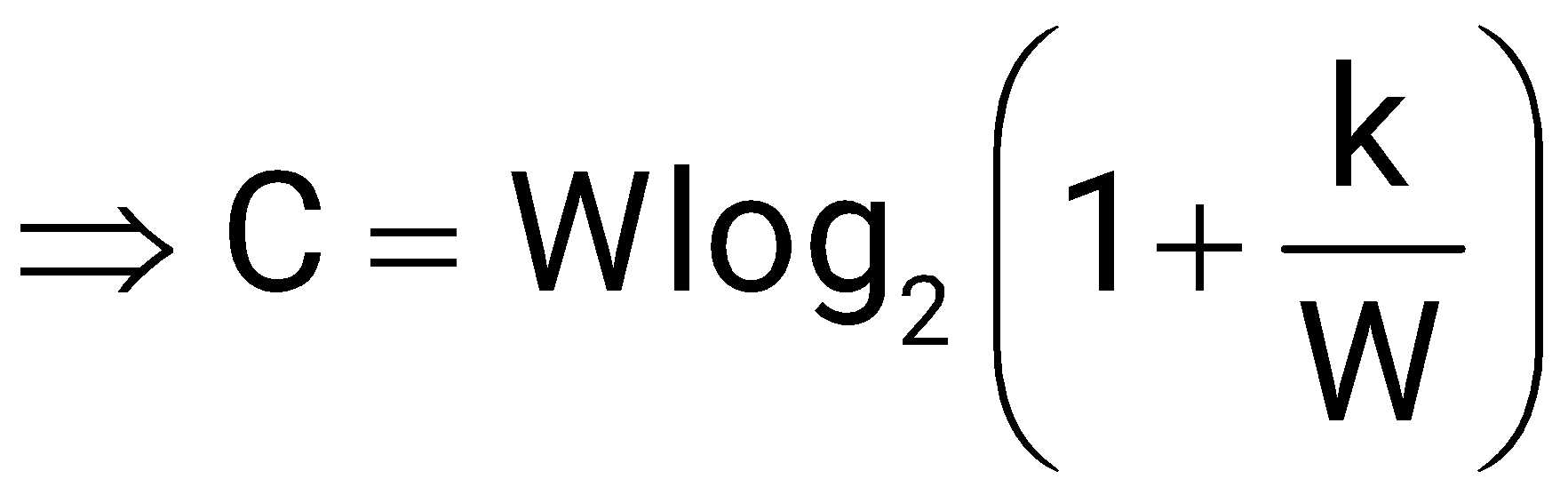

By Shannon's Channel Capacity Theorem,

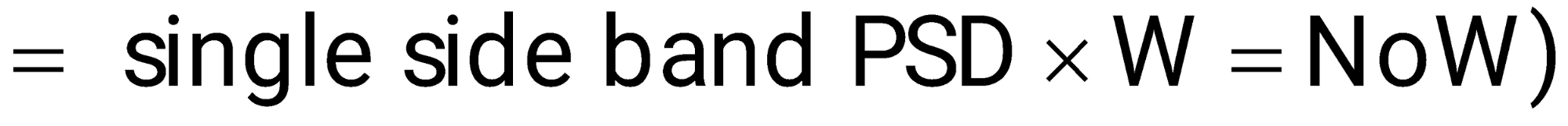

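Noise power (double-sided PSD $N_0/2$ over bandwidth $W$): $N = \frac{N_0}{2} \cdot 2W = N_0 W$.

Given that $P_{av}$ and $N_0$ are constant,

$\frac{P_{av}}{N_0} = $ constant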

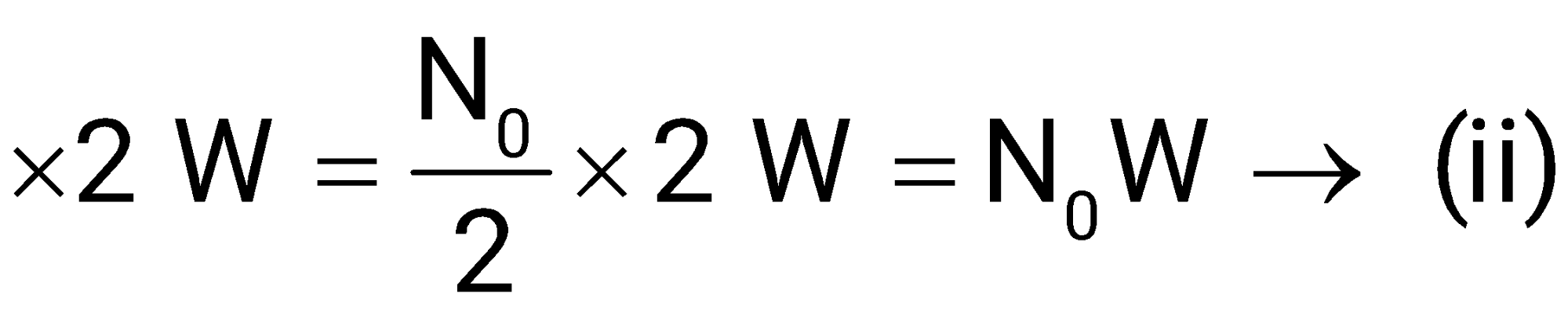

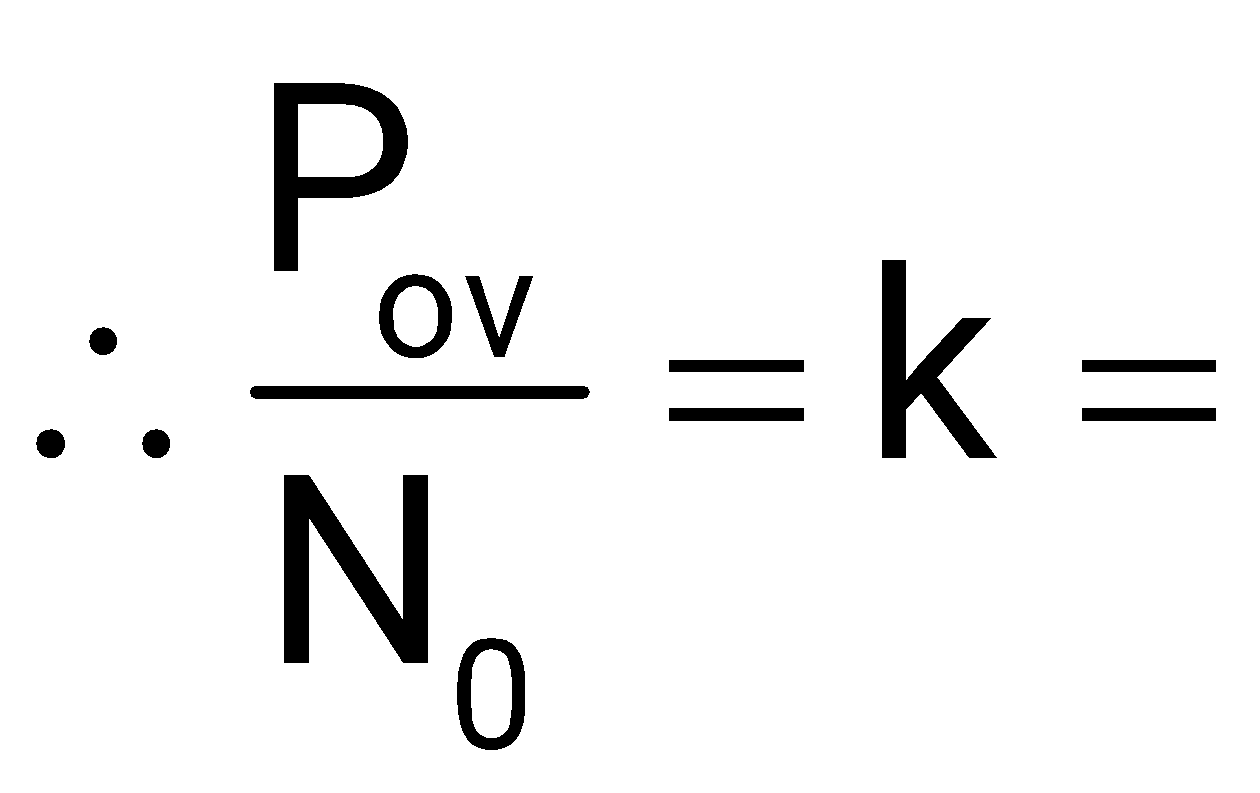

Substituting (ii) and (iii) into (i):

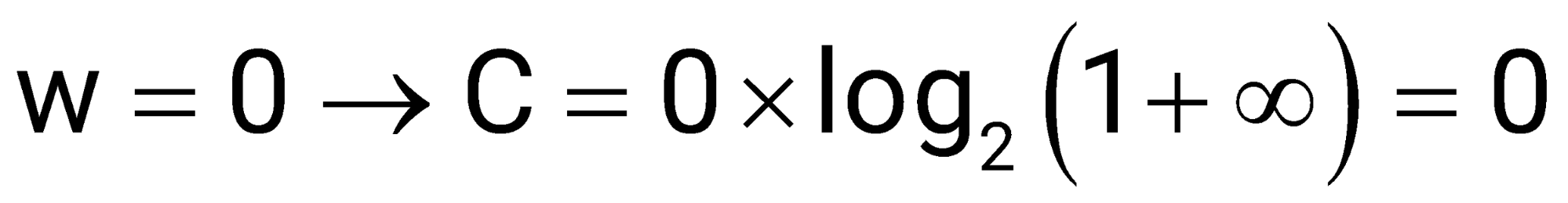

Taking

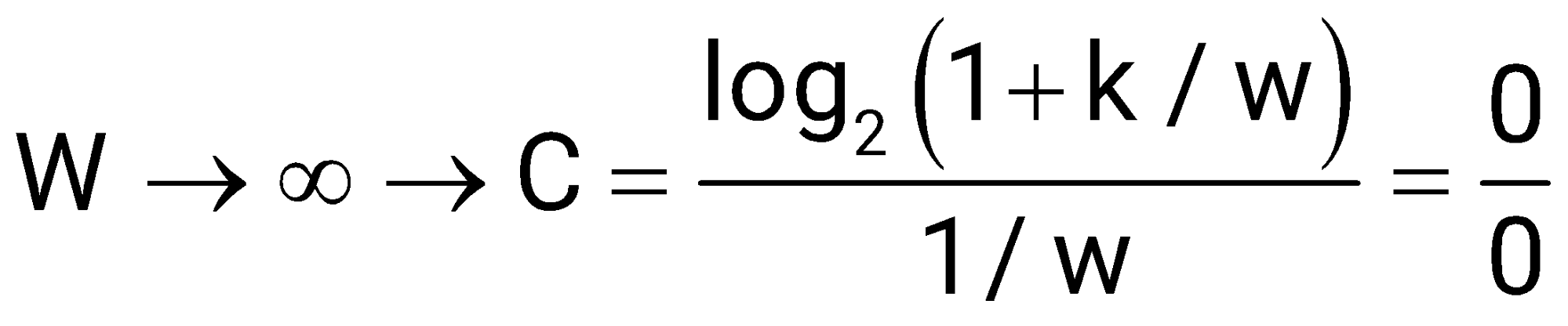

limit (note the limit)

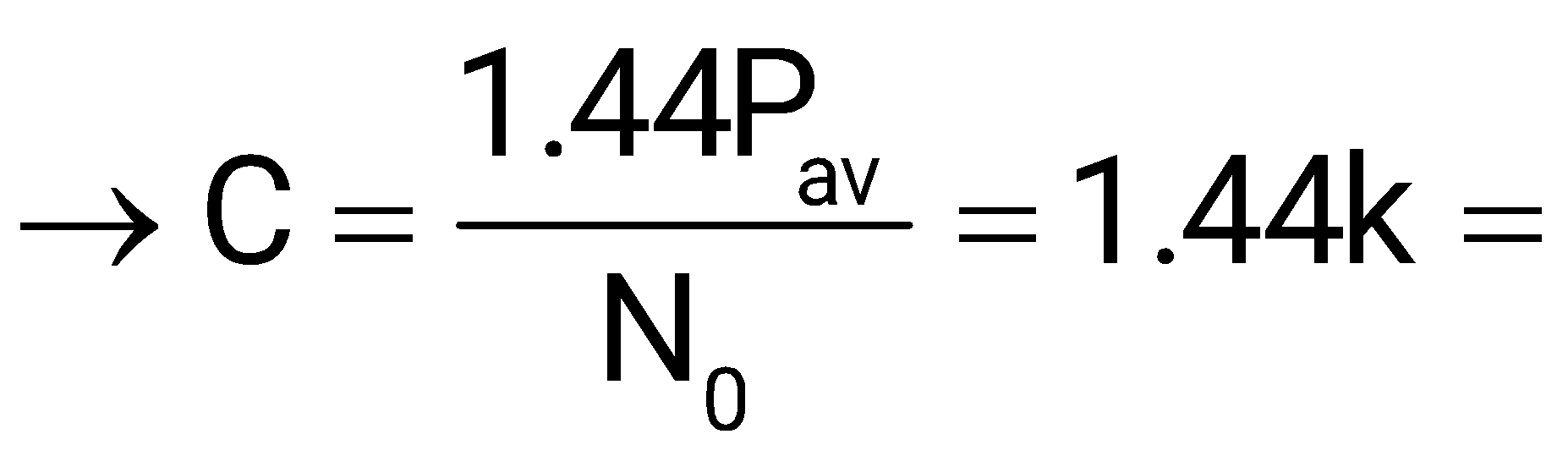

By L'Hôpital's rule, $\lim_{W \to \infty} C = \frac{P_{av}}{N_0} \log_2 e$, which is a constant.

Since only (A) matches the limits, (A) is the correct graph.
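The saturation of capacity with bandwidth can be checked numerically. The sketch below assumes the usual form $C = W \log_2\left(1 + \frac{P_{av}}{N_0 W}\right)$; the function name `awgn_capacity` and the values of `P_AV` and `N0` are illustrative assumptions, not taken from the question.

```python
import math


def awgn_capacity(bandwidth_hz, p_av, n0):
    """Capacity C = W * log2(1 + P_av / (N0 * W)) in bits per second."""
    if bandwidth_hz <= 0:
        return 0.0  # no bandwidth, no capacity
    return bandwidth_hz * math.log2(1.0 + p_av / (n0 * bandwidth_hz))


# Illustrative values only (assumptions, not from the question).
P_AV = 1.0  # average transmit power, watts
N0 = 1.0    # noise PSD parameter, watts per hertz

# Asymptote reached as W grows without bound.
limit = (P_AV / N0) * math.log2(math.e)

for w in (0.1, 1.0, 10.0, 100.0, 1000.0):
    c = awgn_capacity(w, P_AV, N0)
    print(f"W = {w:8.1f} Hz   C = {c:.4f} bit/s   (limit {limit:.4f})")
```

The printed capacity increases with `w` but never exceeds `limit`, which is the behaviour a correct plot must show: it starts at zero and flattens towards a constant.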

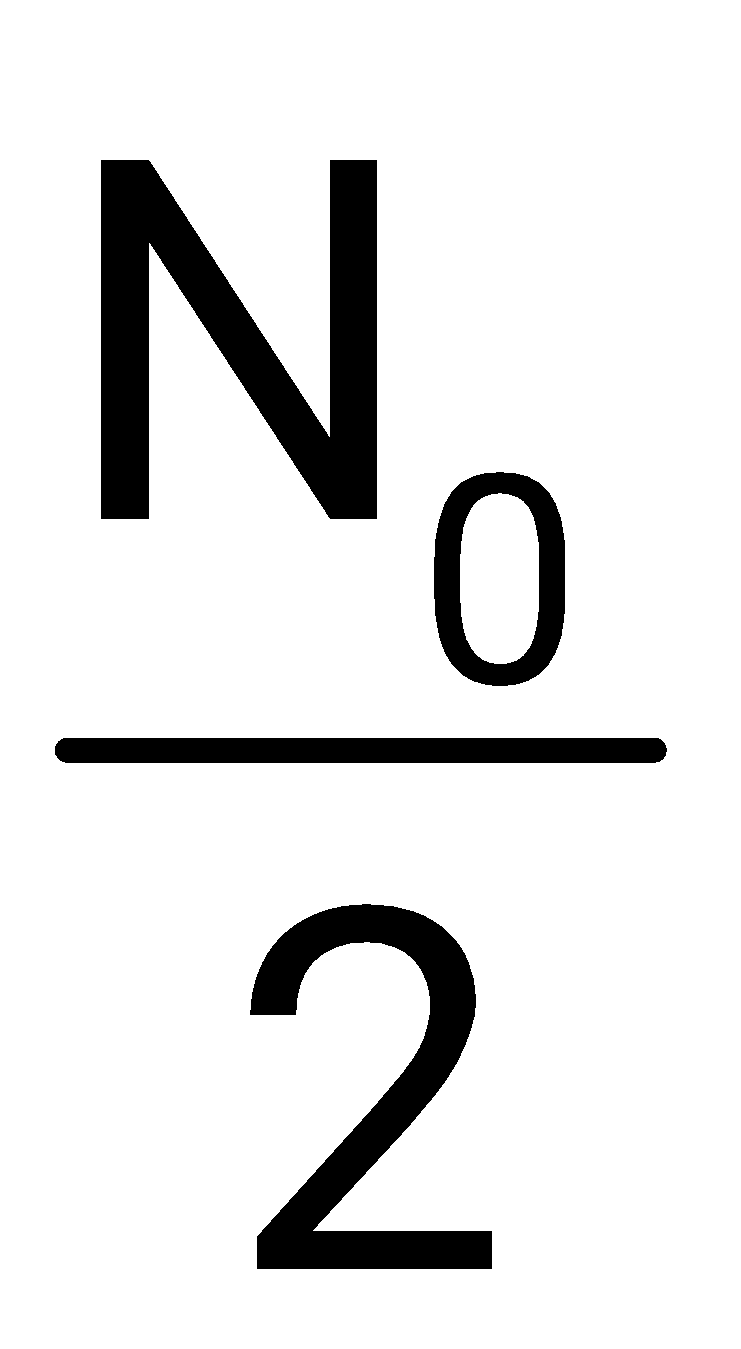

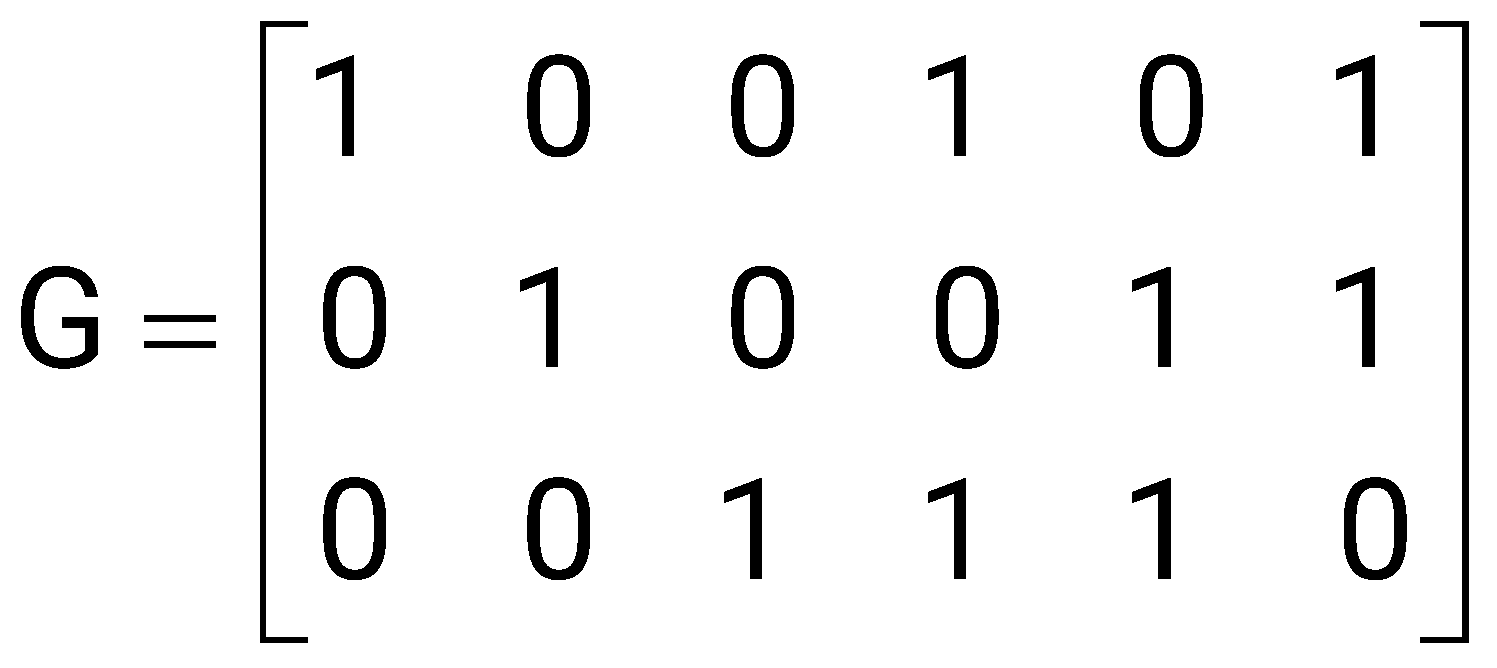

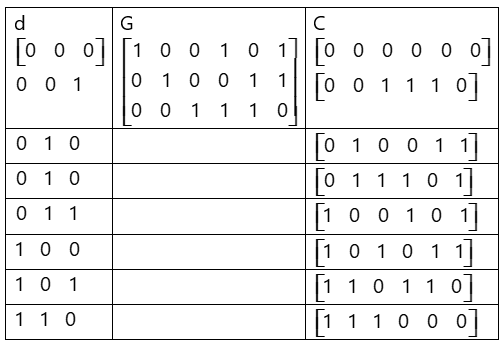

The generator matrix of a binary linear block code is given by

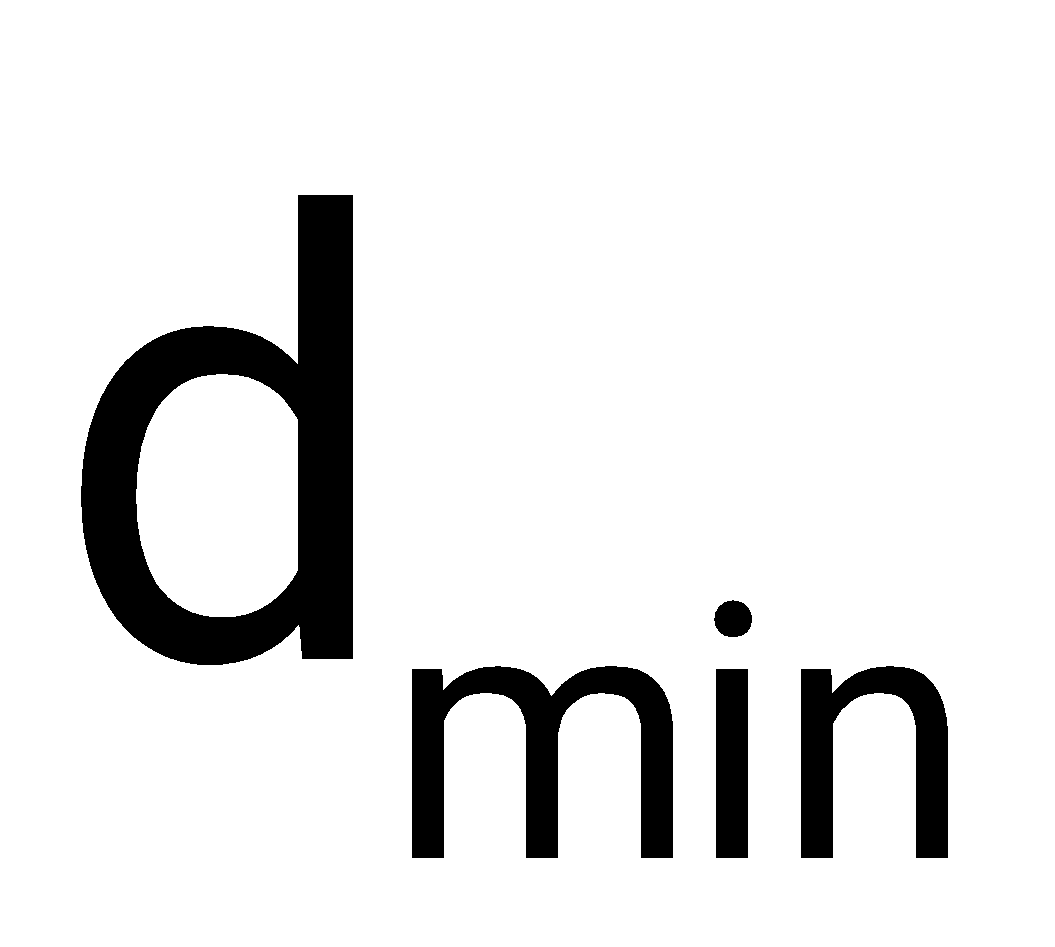

The minimum Hamming distance between codewords equals _________ (answer in integer).

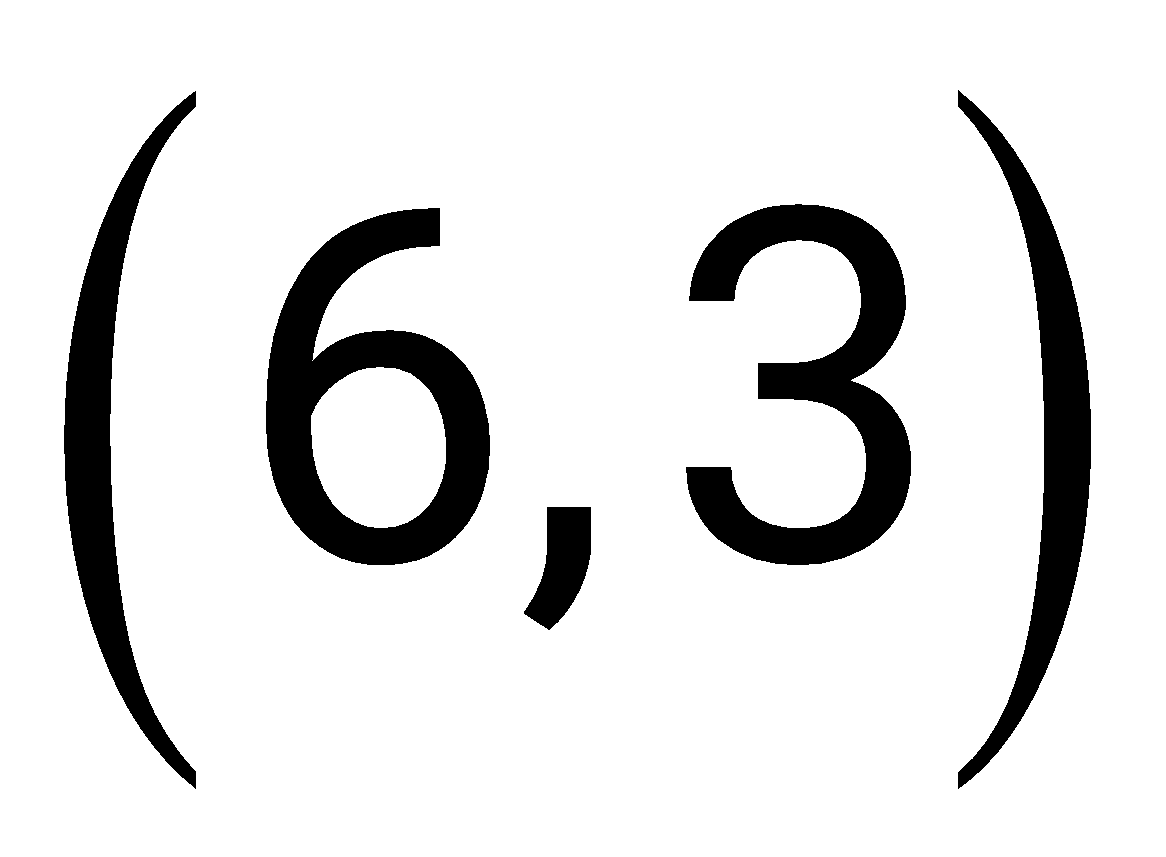

Given code: $(n, k) = (6, 3)$,

where $n$ is the total number of bits in a codeword and $k$ is the number of message bits.

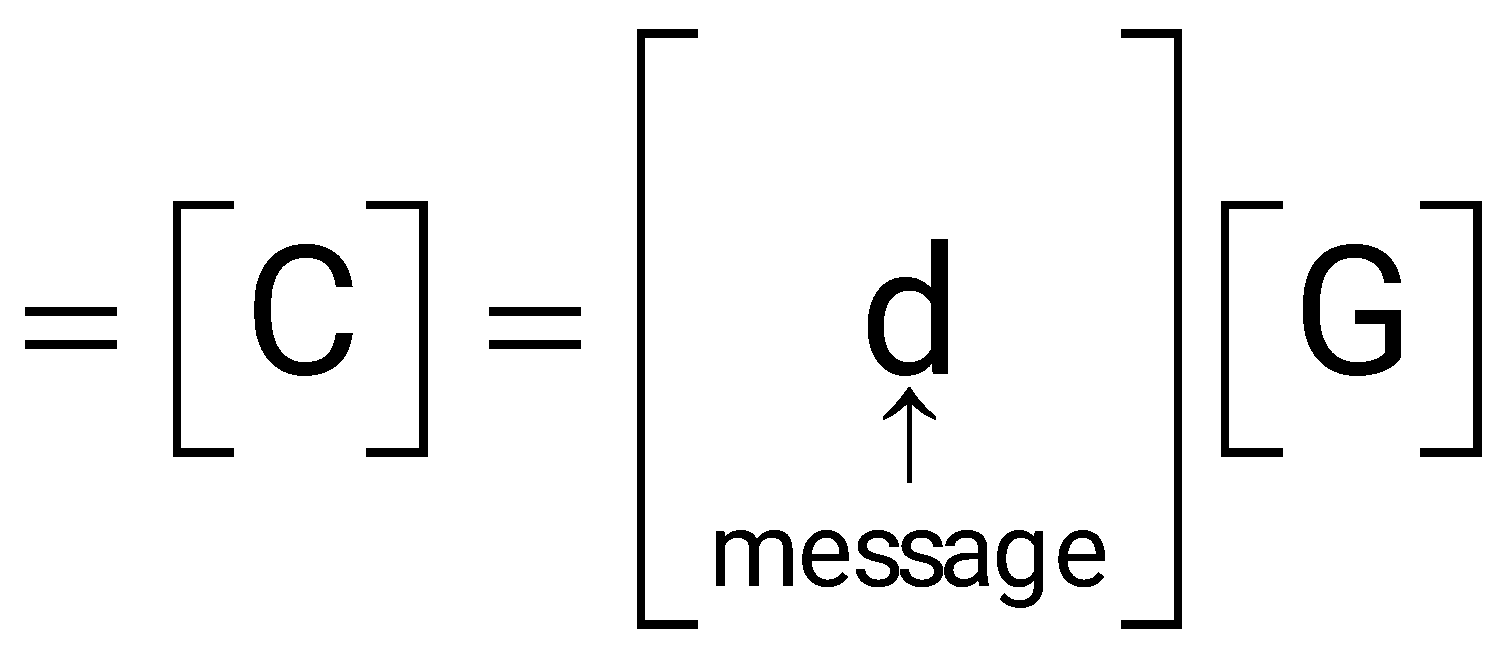

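Each message $d$ maps to the corresponding codeword $C = dG$, computed by modulo-2 matrix multiplication, with $d$ ranging from 000 to 111.

For a linear block code, the minimum Hamming distance equals the smallest Hamming weight (number of non-zero bits) among the non-zero codewords; see the weight column.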
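The enumeration described above can be sketched in a few lines of Python. The generator matrix of the question is only available as an image, so `G_EXAMPLE` below is a hypothetical (6,3) matrix chosen for illustration; substitute the actual rows to reproduce the answer. The function name `min_hamming_distance` is also an assumption.

```python
from itertools import product


def min_hamming_distance(generator):
    """Smallest Hamming weight over all non-zero codewords d*G (mod 2)."""
    k = len(generator)      # number of message bits
    n = len(generator[0])   # codeword length
    best = n
    for message in product((0, 1), repeat=k):
        if not any(message):
            continue  # skip the all-zero message
        codeword = [
            sum(message[i] * generator[i][j] for i in range(k)) % 2
            for j in range(n)
        ]
        best = min(best, sum(codeword))
    return best


# Hypothetical (6,3) generator matrix, NOT the one from the question.
G_EXAMPLE = [
    [1, 0, 0, 1, 1, 0],
    [0, 1, 0, 0, 1, 1],
    [0, 0, 1, 1, 0, 1],
]

print(min_hamming_distance(G_EXAMPLE))  # 3 for this example matrix
```

Because the code is linear, the distance between any two codewords is the weight of their sum, which is itself a codeword; that is why scanning the $2^k - 1$ non-zero codewords is enough.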

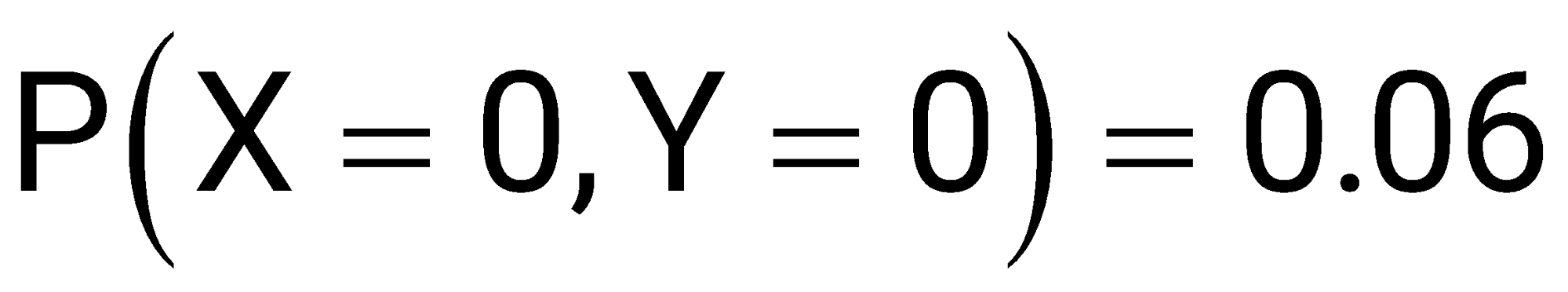

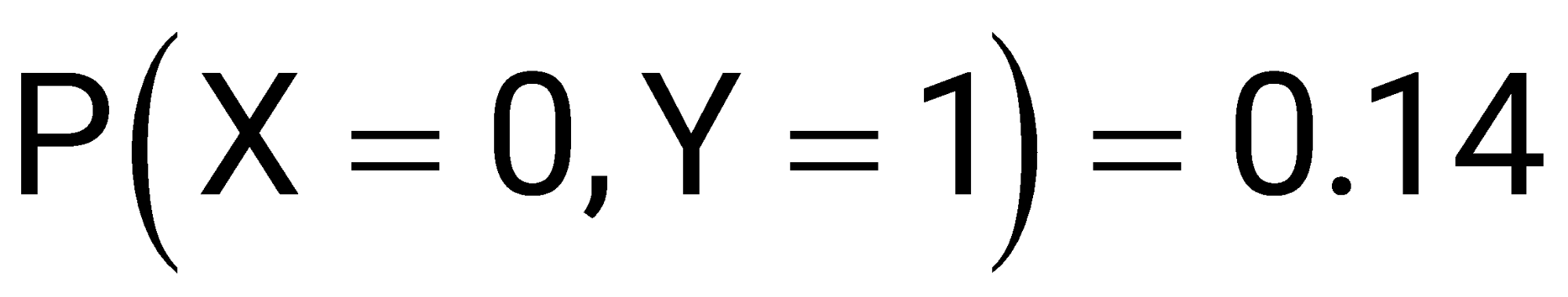

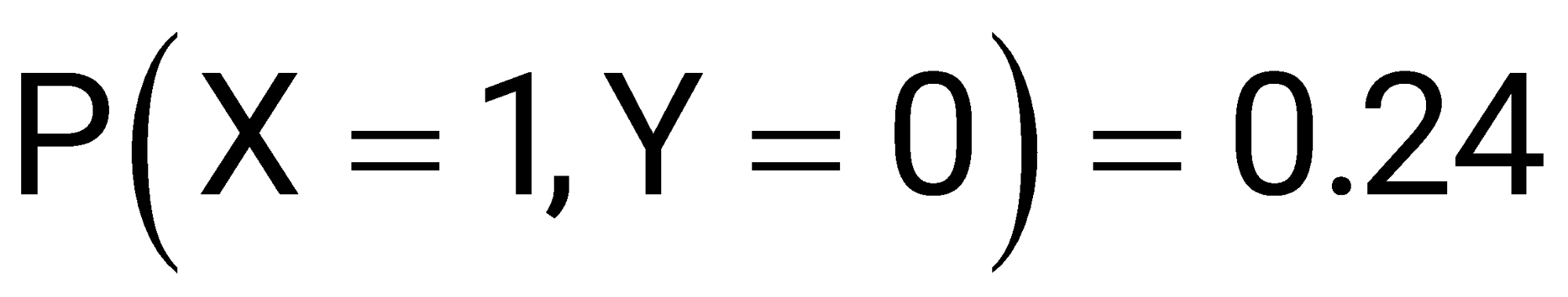

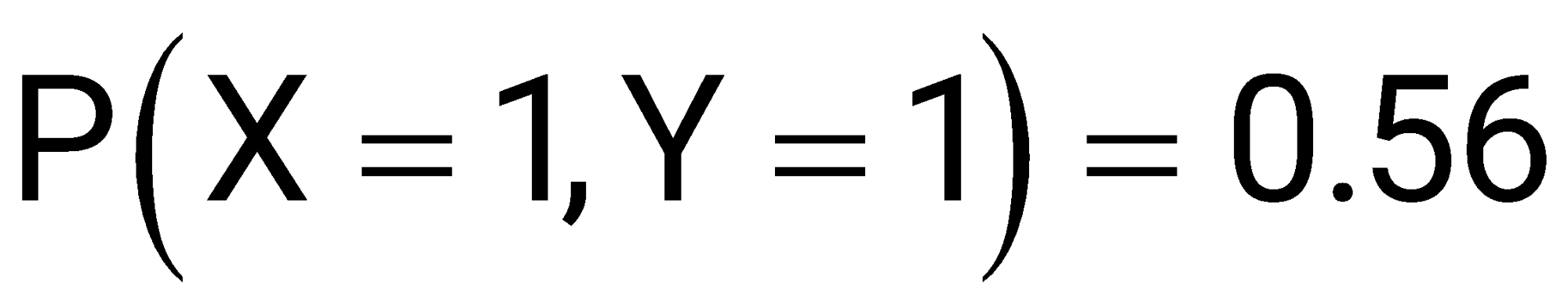

$X$ and $Y$ are Bernoulli random variables taking values in $\{0, 1\}$. The joint probability mass function of the random variables is given by:

The mutual information $I(X; Y)$ is _________ (rounded off to two decimal places).

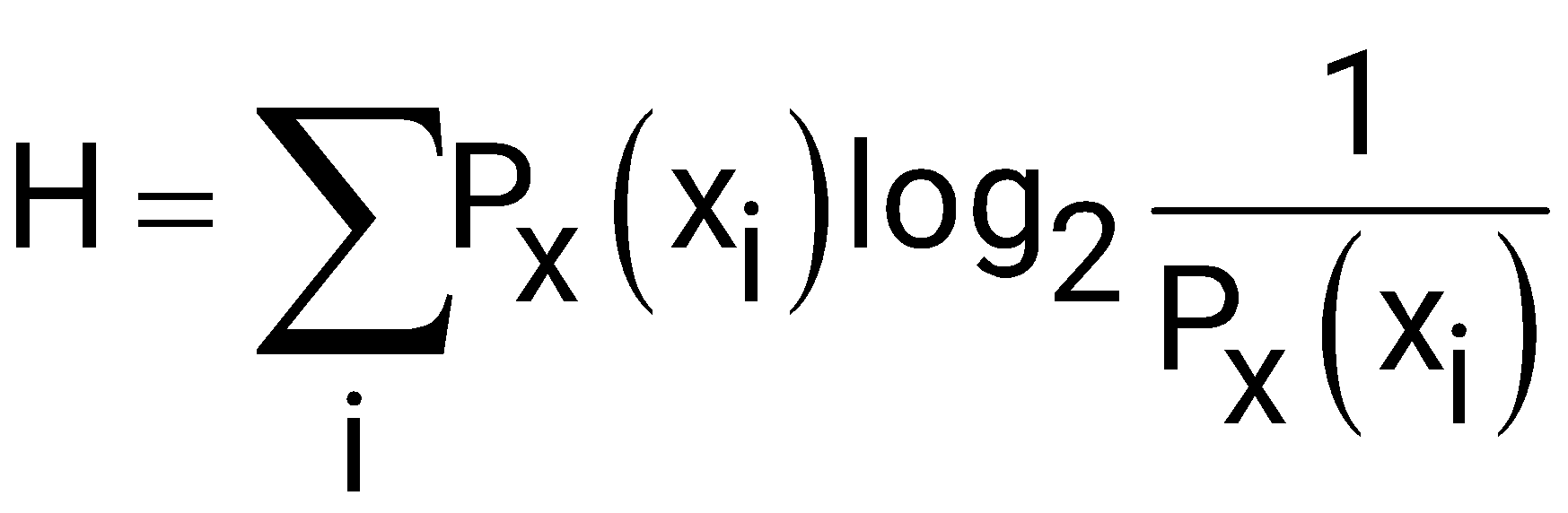

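The mutual information $I(X; Y)$ between two random variables $X$ and $Y$ is defined as:

$$I(X; Y) = \sum_{x} \sum_{y} p(x, y) \log_2 \frac{p(x, y)}{p(x)\, p(y)}$$

where $p(x, y)$ is the joint probability mass function of $X$ and $Y$, $p(x)$ is the marginal probability of $X$, and $p(y)$ is the marginal probability of $Y$.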

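Given the joint PMF, the marginal probabilities of $X$ and $Y$ are obtained by summing it over the other variable:

The marginal probabilities of $X = 0$ and $X = 1$: $p(x) = \sum_{y} p(x, y)$

The marginal probabilities of $Y = 0$ and $Y = 1$: $p(y) = \sum_{x} p(x, y)$

Every joint probability equals the product of the corresponding marginals, so each of the four terms in the sum is zero:

$I(X; Y) = 0 + 0 + 0 + 0$

$= 0$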

Alternate solution:

$X$ and $Y$ are Bernoulli random variables whose joint PMF factors as $p(x, y) = p(x)\, p(y)$,

so they are independent.

Thus, $I(X; Y) = 0$.
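The definition of mutual information can be evaluated directly from a joint PMF table. In the sketch below, the function name `mutual_information` and both example tables are assumptions for illustration: the independent table is built as a product of made-up marginals, not copied from the question.

```python
import math


def mutual_information(joint):
    """I(X;Y) in bits for a joint PMF given as rows joint[x][y]."""
    px = [sum(row) for row in joint]        # marginal of X
    py = [sum(col) for col in zip(*joint)]  # marginal of Y
    total = 0.0
    for x, row in enumerate(joint):
        for y, pxy in enumerate(row):
            if pxy > 0:  # terms with p(x, y) = 0 contribute nothing
                total += pxy * math.log2(pxy / (px[x] * py[y]))
    return total


# Hypothetical marginals; the joint PMF is their product, so X and Y
# are independent by construction.
PX = [0.2, 0.8]
PY = [0.3, 0.7]
independent = [[a * b for b in PY] for a in PX]

# Hypothetical fully dependent case: Y always equals X.
dependent = [[0.5, 0.0], [0.0, 0.5]]

print(round(mutual_information(independent), 2))
print(round(mutual_information(dependent), 2))
```

For the independent table the result is zero up to floating-point rounding, while the fully dependent table gives one bit, the entropy of a fair binary variable.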

A source transmits symbols from an alphabet of size 16. The value of maximum achievable entropy (in bits) is _________.

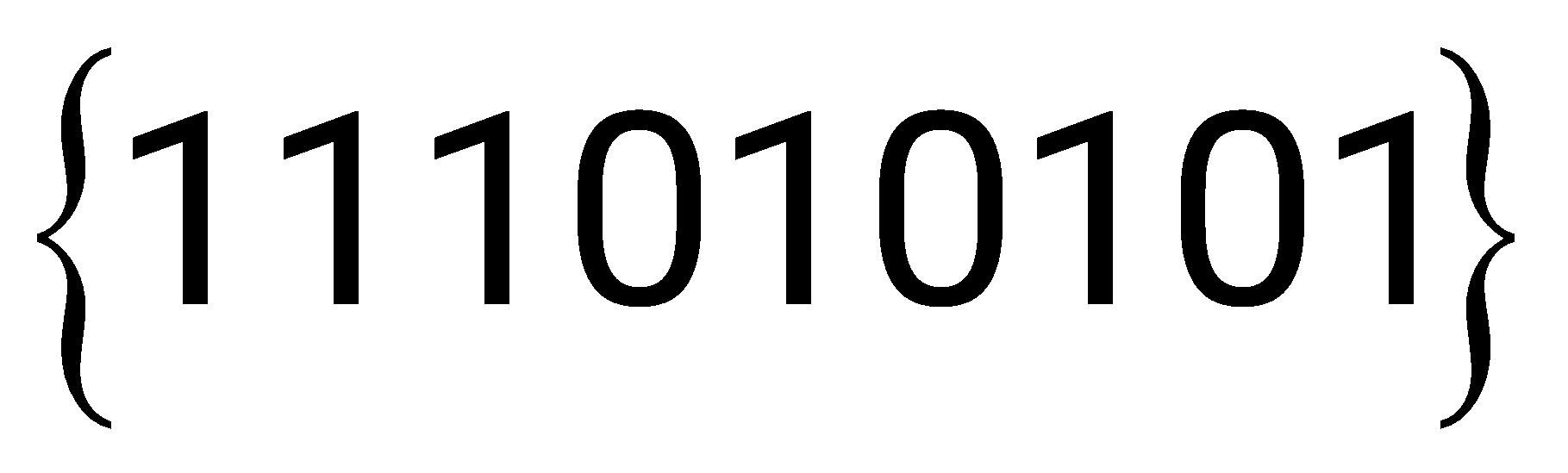

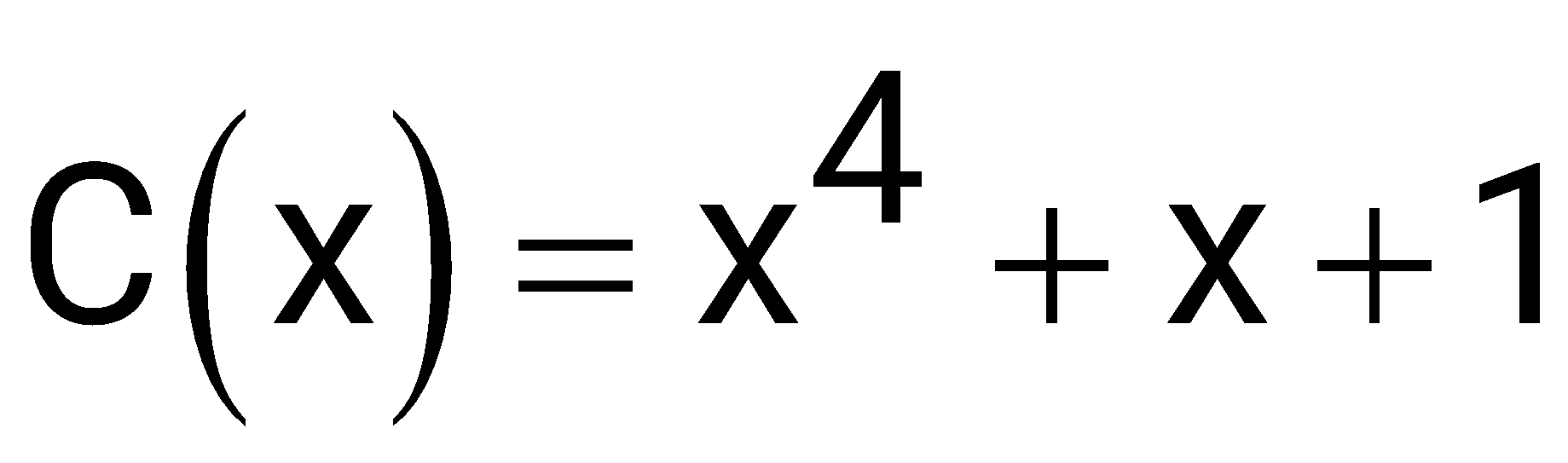

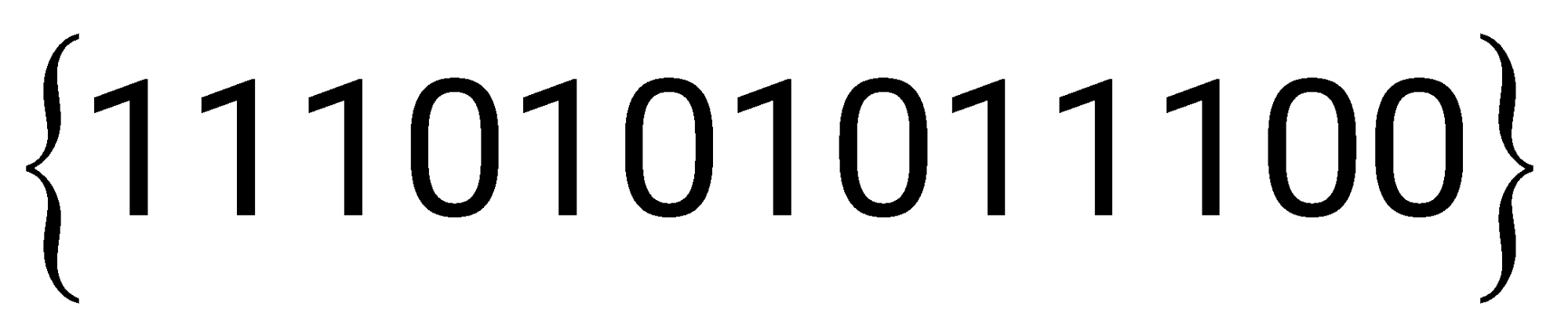

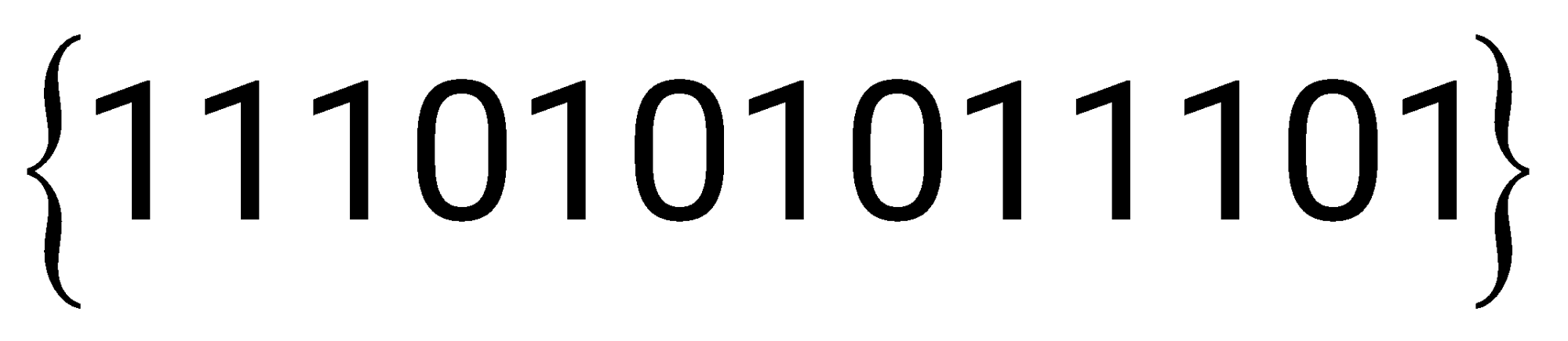

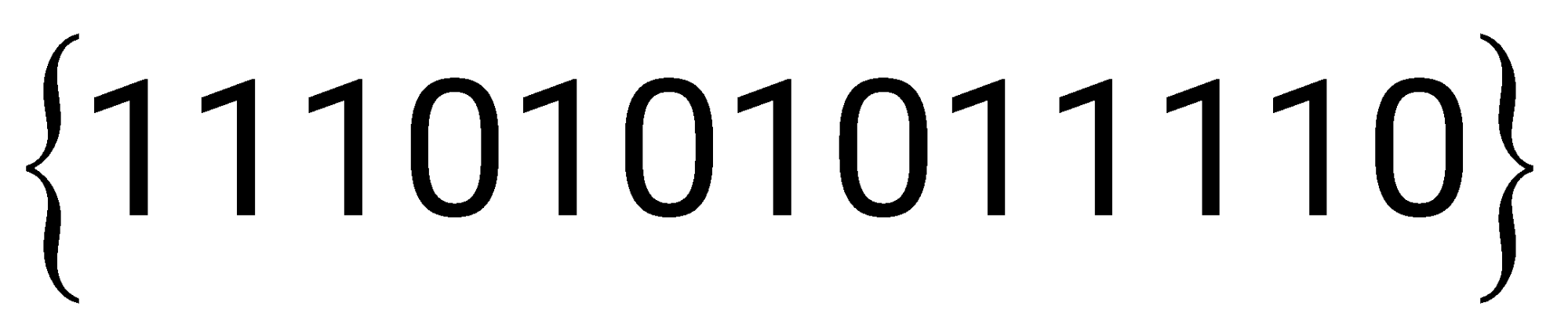

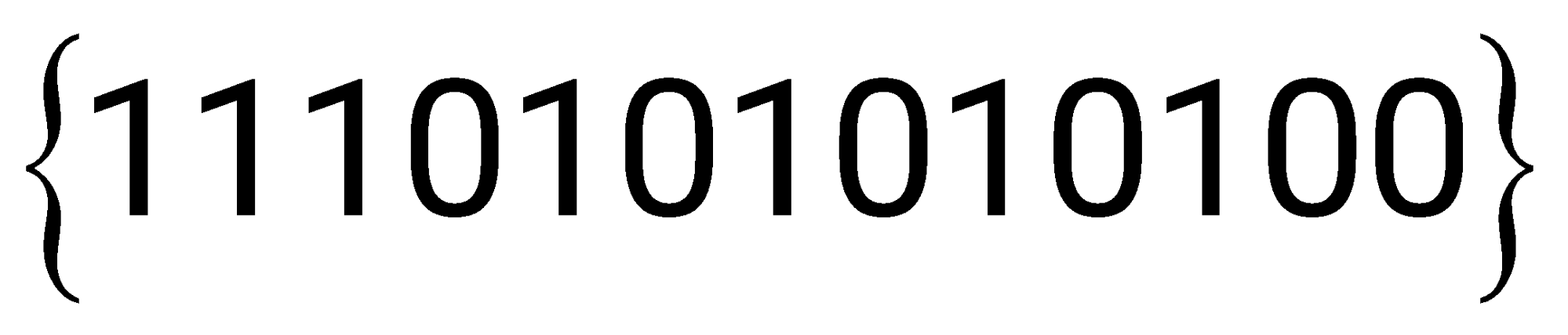

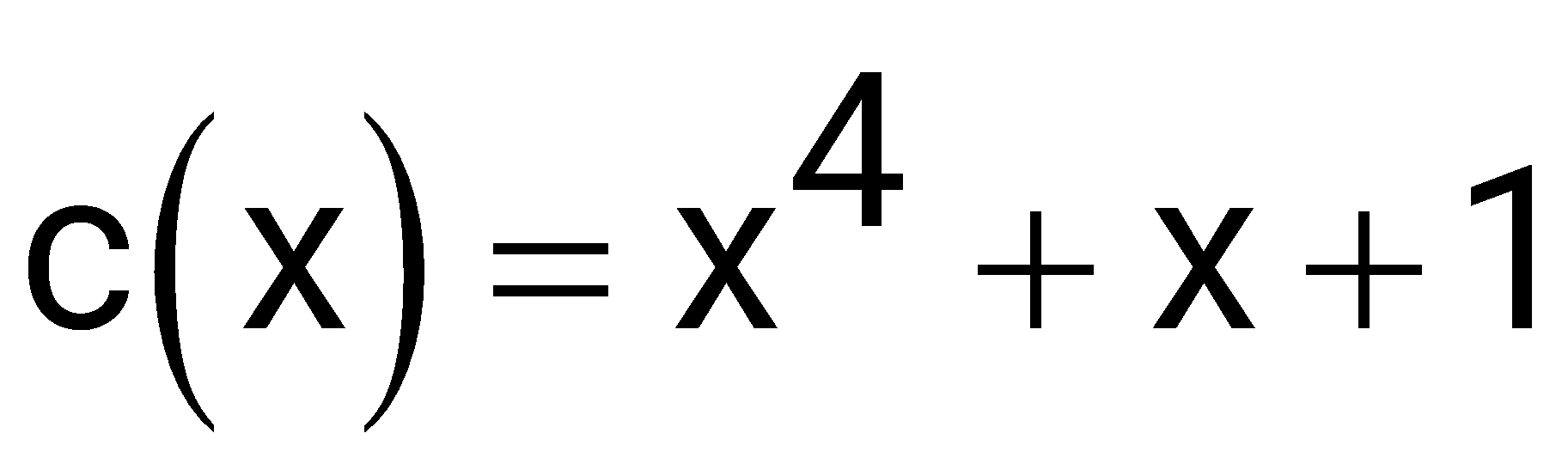

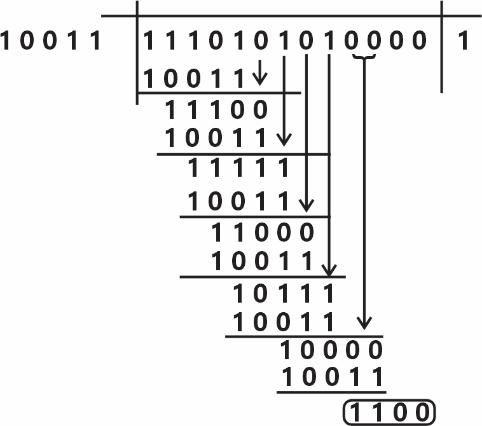
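For the alphabet-of-16 question above, entropy is maximised when all symbols are equally likely, giving $H_{max} = \log_2 16 = 4$ bits. A minimal sketch of that check follows; the helper name `entropy_bits` and the skewed comparison distribution are assumptions for illustration.

```python
import math


def entropy_bits(probabilities):
    """Shannon entropy H = -sum(p * log2(p)) in bits."""
    return -sum(p * math.log2(p) for p in probabilities if p > 0)


M = 16  # alphabet size from the question

uniform = [1.0 / M] * M
# Hypothetical non-uniform source over the same alphabet, for comparison.
skewed = [0.5] + [0.5 / (M - 1)] * (M - 1)

print(entropy_bits(uniform))  # 4.0
print(entropy_bits(skewed) < entropy_bits(uniform))  # True
```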

The information bit sequence 111010101 is to be transmitted by encoding with Cyclic Redundancy Check 4 (CRC-4) code, for which the generator polynomial is $x^4 + x + 1$. The encoded sequence of bits is ___________.

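Given information bit sequence: d = 111010101

Generator polynomial: $x^4 + x + 1$, i.e. divisor bits 10011

The highest power of the generator polynomial is 4,

so append 4 zeros to d:

d = 111010101 0000

Dividing this sequence by 10011 using modulo-2 (XOR) division leaves the remainder 1100. Replace the appended 0000 in d with this remainder:

Encoded sequence = 1110101011100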
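The modulo-2 long division behind this result can be sketched as follows. The generator bits `10011` (that is, $x^4 + x + 1$) are an assumption: they are consistent with the remainder 1100 stated above, but the polynomial itself is not legible in this copy of the question. The function names are also illustrative.

```python
def crc_remainder(message_bits, generator_bits):
    """Remainder of (message followed by `degree` zeros) divided mod 2."""
    degree = len(generator_bits) - 1
    work = [int(b) for b in message_bits] + [0] * degree
    gen = [int(b) for b in generator_bits]
    for i in range(len(message_bits)):
        if work[i] == 1:  # XOR the divisor in wherever the leading bit is 1
            for j, g in enumerate(gen):
                work[i + j] ^= g
    return "".join(str(b) for b in work[-degree:])


def crc_encode(message_bits, generator_bits):
    """Append the CRC remainder to the message bits."""
    return message_bits + crc_remainder(message_bits, generator_bits)


MESSAGE = "111010101"  # information bits from the solution above
GENERATOR = "10011"    # assumed generator x^4 + x + 1

print(crc_remainder(MESSAGE, GENERATOR))  # 1100
print(crc_encode(MESSAGE, GENERATOR))     # 1110101011100
```

A receiver can run the same division on the received word; an all-zero remainder indicates that no error was detected.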

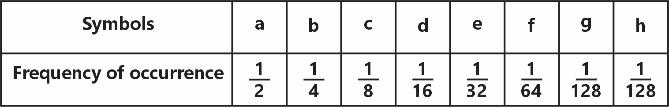

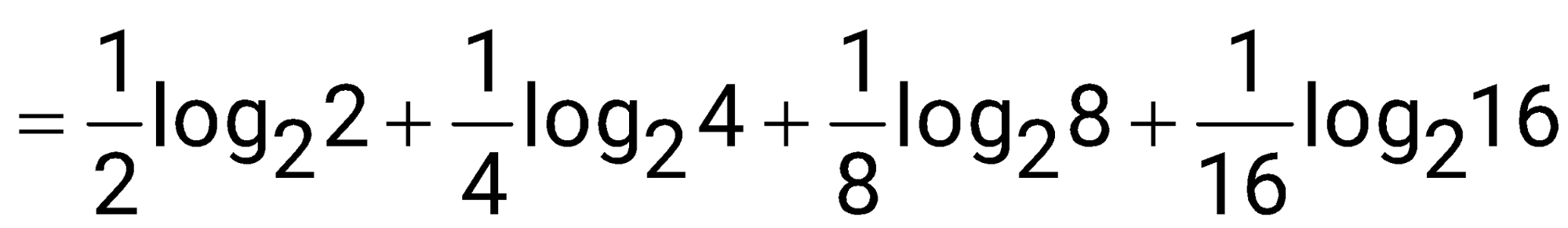

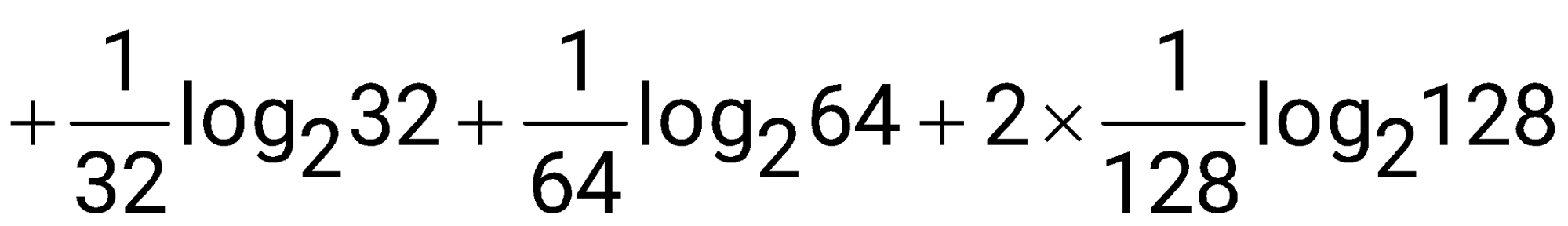

The frequency of occurrence of 8 symbols (a-h) is shown in the table below. A symbol is chosen and it is determined by asking a series of "yes/no" questions which are assumed to be truthfully answered. The average number of questions when asked in the most efficient sequence, to determine the chosen symbol, is _______ (rounded off to two decimal places).

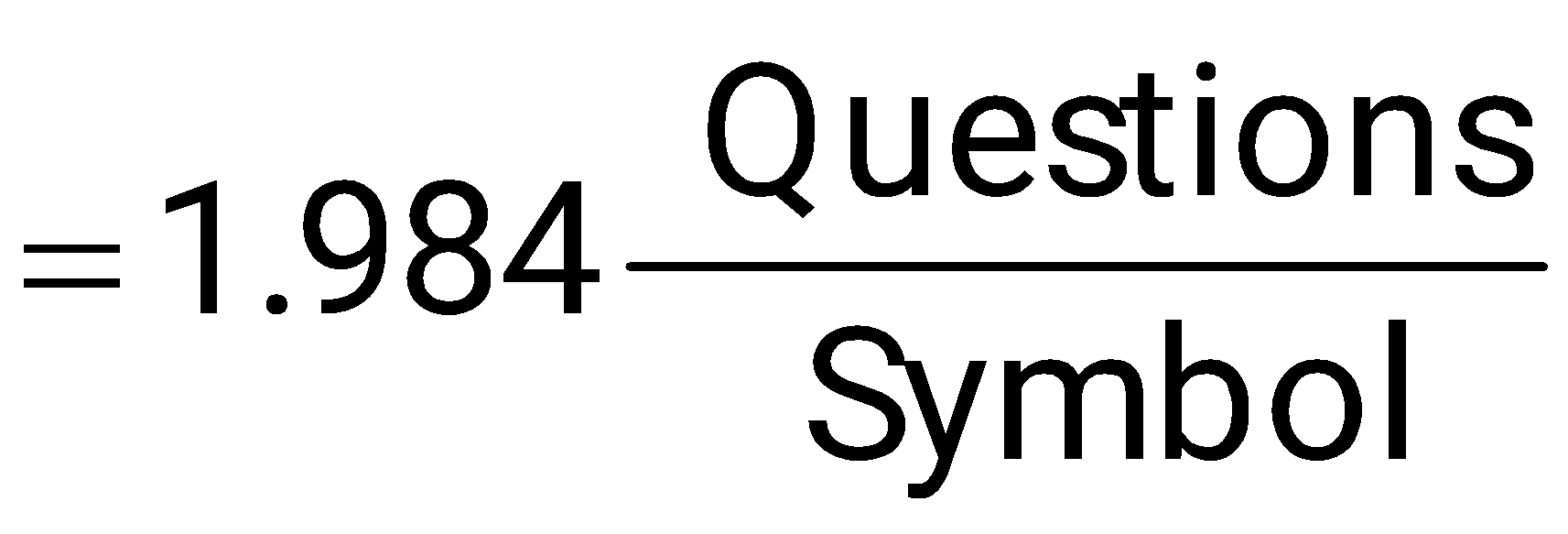

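The average number of questions, when asked in the most efficient sequence, needed to determine the chosen symbol equals the minimum possible average number of questions per symbol, i.e. the average codeword length of an optimal binary (Huffman) code for the symbol frequencies.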
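An optimal sequence of yes/no questions corresponds to a Huffman tree, so the average can be computed by repeatedly merging the two least likely symbols. The frequency table of the question is only available as an image, so `FREQ_EXAMPLE` below is an assumed set of eight frequencies used purely for illustration; replace it with the tabulated values. The function name is likewise an assumption.

```python
import heapq


def huffman_average_length(probabilities):
    """Average codeword length (questions per symbol) of a Huffman code."""
    heap = list(probabilities)
    heapq.heapify(heap)
    average = 0.0
    while len(heap) > 1:
        a = heapq.heappop(heap)  # two least likely nodes
        b = heapq.heappop(heap)
        # Each merge adds one question for every symbol under the new node.
        average += a + b
        heapq.heappush(heap, a + b)
    return average


# Assumed frequencies for symbols a-h (NOT read from the table image).
FREQ_EXAMPLE = [1/2, 1/4, 1/8, 1/16, 1/32, 1/64, 1/128, 1/128]

print(huffman_average_length(FREQ_EXAMPLE))  # 1.984375
```

When every frequency is a power of 1/2, as in this assumed table, the Huffman average equals the source entropy exactly; otherwise it lies within one question of the entropy.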